High Performance Magento on AWS

This year I've had the challenge of running a legacy Magento 1.9 Community Edition installation on AWS. This post outlines some of the key lessons I've gathered over the past 3 months and the solutions we've adopted to improve performance. In general I find Magento 1.9 to be very reactive software, which makes sense considering it was designed in the pre-cloud era. However, I've found a number of ways to significantly improve its performance.

Update: Check out MageCloudKit - The High Performance Stack for running Magento on AWS.

Architecture Overview

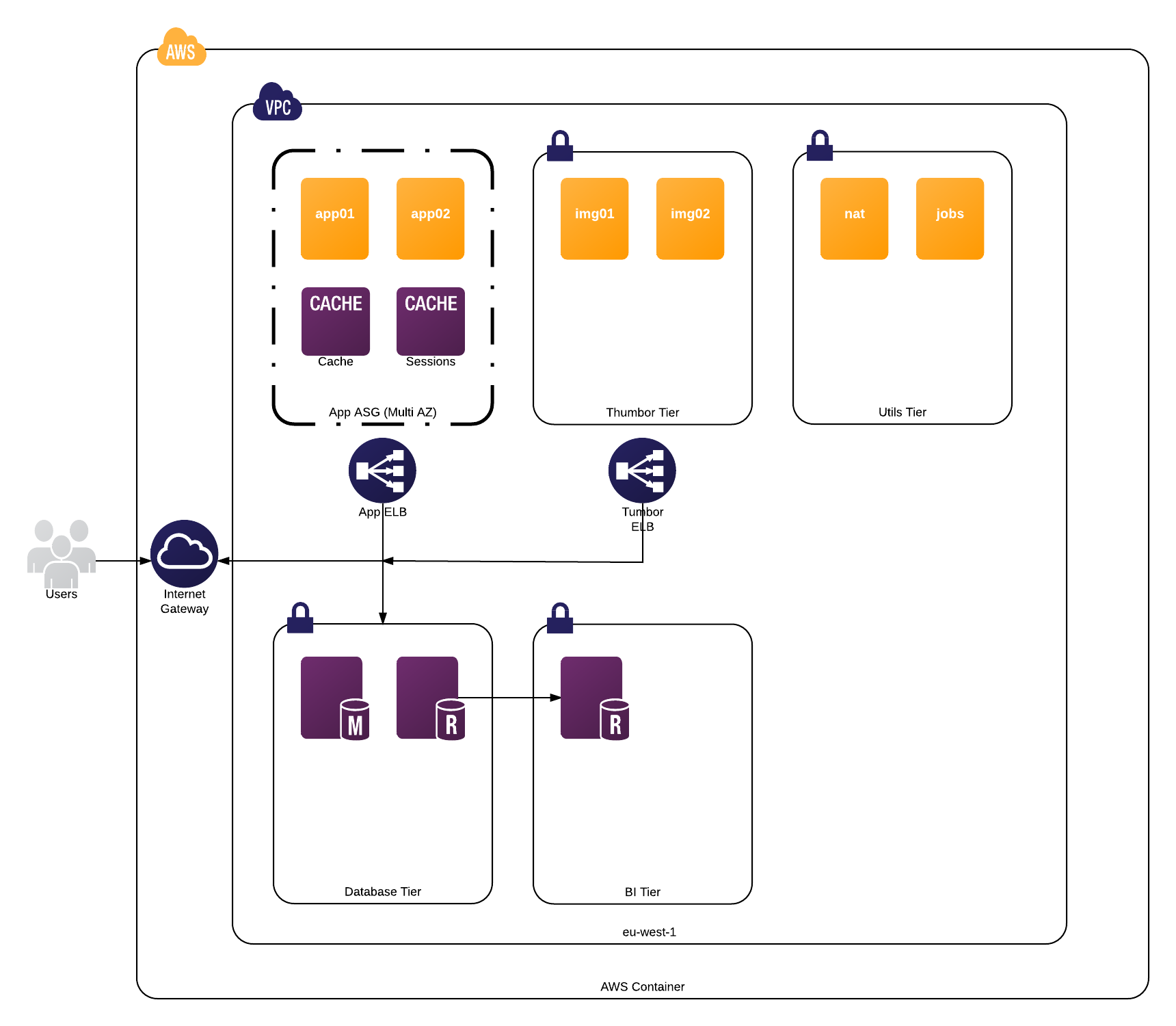

We run a production environment very similar to the architecture I have illustrated below.

Our resources all reside inside an AWS VPC. The application is packaged inside a Docker container, which is then deployed onto app servers in an autoscaling group. We use two Redis instances for caching, RDS with Multi-AZ replication enabled (plus a read-only slave for analytics) and Amazon EFS for media asset storage.

For deployment we make use of my [Terraform rolling deploys strategy]({% post_url 2016-02-19-rolling-deploys-on-aws-using-terraform %}). I duplicate this whole environment between staging and production.

Our approach is designed to favour network services, including all of the relevant AWS products, and to avoid high disk I/O wherever possible.

Service Discovery

We use Amazon Route 53 to provide a very primitive form of service discovery using private hosted zones. I use Terraform to automatically create DNS records for all of our key services.

It looks very similar to:

- db.aws-region.internal

- redis-cache.aws-region.internal

- redis-session.aws-region.internal

aws-region is replaced with our AWS region and internal is replaced with our company identifier.
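As a rough illustration of the naming scheme above, the hostnames can be derived from the region and the company identifier. This is only a sketch: the function name and the example values `eu-west-1` and `example` are hypothetical, and in our setup the actual records are created by Terraform rather than by application code.

```python
# Service names taken from the list above.
SERVICES = ("db", "redis-cache", "redis-session")


def service_hostnames(region: str, identifier: str) -> dict:
    """Build the private DNS name for each service.

    `region` stands in for "aws-region" and `identifier` for "internal"
    in the pattern <service>.<aws-region>.<internal>.
    """
    return {name: f"{name}.{region}.{identifier}" for name in SERVICES}


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    for service, hostname in service_hostnames("eu-west-1", "example").items():
        print(service, "->", hostname)
```

Because the application only ever refers to these names, the same container image can run in staging and production without configuration changes.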

Sessions and Cache

Redis stores our user sessions and the Magento cache, as it provides a fast interface over the network. I strongly discourage using the default Magento file cache when running inside a Docker container. It is also important to use two discrete Redis instances, one for sessions and one for the cache, for maximum performance. Our deploys automatically bust the cache by incrementing a cache key. Keeping this state in Redis also allows you to run multiple web servers, which you should be doing on AWS regardless.
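The cache-busting idea can be sketched as follows. This is a simplified model, not our actual deployment code: a plain dictionary stands in for the shared Redis instance, and the names `cache_prefix` and `PrefixedCache` are made up for the example. The point is that entries written under the previous deploy number simply become unreachable once the number is incremented.

```python
def cache_prefix(deploy_number: int) -> str:
    """Return the key prefix for a given deploy (hypothetical format)."""
    return f"mage_{deploy_number}_"


class PrefixedCache:
    """Tiny wrapper that namespaces every key with the deploy prefix."""

    def __init__(self, backend: dict, prefix: str) -> None:
        self.backend = backend  # stands in for the shared Redis instance
        self.prefix = prefix

    def set(self, key: str, value: str) -> None:
        self.backend[self.prefix + key] = value

    def get(self, key: str):
        return self.backend.get(self.prefix + key)


if __name__ == "__main__":
    shared = {}
    old_release = PrefixedCache(shared, cache_prefix(41))
    old_release.set("config", "stale value")

    # The next deploy increments the number, so it never sees old entries.
    new_release = PrefixedCache(shared, cache_prefix(42))
    print(new_release.get("config"))  # None
```

One consequence of this design is that stale entries are never deleted explicitly, so it assumes the cache has expiry or an eviction policy configured to reclaim them.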

PHP 7

Whilst Magento 1.9 does not officially support PHP 7 out of the box, I'd strongly recommend using it. You will need to install a compatibility plugin that overrides some core classes, but the performance benefits are definitely worth it. Ensure OPcache is enabled. More information on using Magento 1.9 with PHP 7 is available here: https://github.com/Inchoo/Inchoo_PHP7.

Media Storage

Many people recommend storing the media assets in the database when working in a multi-server environment. However, a network filesystem can solve the same problem. On AWS I'd recommend using Amazon Elastic File System (EFS). It is now out of preview and generally available in certain regions. I'd strongly discourage using alternative solutions such as s3fs due to their poor performance. EFS mounts as a simple NFS volume and grows and shrinks automatically.

It is important to note that by design Magento makes some very expensive calls to the PHP getimagesize() function when trying to render frontend pages. We ended up deploying a Thumbor cluster and overriding the Mage_Catalog_Helper_Image class. This resulted in a significant performance improvement on uncached pages and I'd highly recommend following the same pattern.
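To give a feel for what the overridden helper has to produce, here is a sketch of building a signed Thumbor URL. It is written in Python rather than PHP purely for illustration, and it reflects my understanding of Thumbor's URL signing (an HMAC-SHA1 of the path, URL-safe base64 encoded), so check it against the Thumbor documentation before relying on it. The host, key and image path are hypothetical.

```python
import base64
import hashlib
import hmac


def thumbor_url(base: str, security_key: str, image: str,
                width: int, height: int) -> str:
    """Build a signed resize URL (assumed Thumbor URL layout)."""
    # The path describes the operation; the frontend no longer needs to
    # inspect the image itself to know its dimensions.
    path = f"{width}x{height}/smart/{image}"
    digest = hmac.new(security_key.encode("utf-8"),
                      path.encode("utf-8"),
                      hashlib.sha1).digest()
    signature = base64.urlsafe_b64encode(digest).decode("ascii")
    return f"{base}/{signature}/{path}"


if __name__ == "__main__":
    print(thumbor_url("https://thumbor.example.internal",  # hypothetical host
                      "hypothetical-key",                   # hypothetical key
                      "shop.example/media/catalog/product/a.jpg",
                      300, 200))
```

The resizing work then happens on the Thumbor cluster on demand, and the resulting images can be cached by the CDN.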

Offload Resources to a CDN

The static and media assets should all be served from a sub-domain with a CDN in front of it. Setting the correct HTTP headers is a must so that the assets are cached for an extended period of time. I have used both CloudFront and CloudFlare with Nginx behind them to achieve this. Ensure the static assets are also minified and carry a token in the filename to bust caches on deployment.
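One common way to generate the filename token is to hash the file contents, so the name only changes when the asset does. The sketch below assumes that approach; the function name, the 8-character token length and the header value are illustrative choices rather than what our build actually uses.

```python
import hashlib
from pathlib import PurePosixPath

# Long-lived caching is safe because a changed file gets a new name.
LONG_CACHE_HEADERS = {"Cache-Control": "public, max-age=31536000"}


def fingerprinted_name(filename: str, content: bytes, length: int = 8) -> str:
    """Insert a short content hash before the file extension."""
    token = hashlib.sha256(content).hexdigest()[:length]
    path = PurePosixPath(filename)
    return str(path.with_name(f"{path.stem}.{token}{path.suffix}"))


if __name__ == "__main__":
    css = b"body{margin:0}"
    # Prints something like skin/css/styles.min.<token>.css
    print(fingerprinted_name("skin/css/styles.min.css", css))
```

The templates then need to reference the fingerprinted name, which is usually handled by writing a manifest that maps original filenames to fingerprinted ones at build time.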

Full Page Cache

An FPC with Magento is mandatory if you're attempting to build a high-performance website. Magento is not very performant by design. The de facto solution is the Turpentine Varnish plugin. However, we've also had good results using a Redis cache backend. Using a crawler to warm your cache helps ensure that visitors rarely hit an uncached page.
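A cache warmer does not need to be sophisticated. The sketch below assumes the store publishes a standard XML sitemap and simply requests every URL in it once; the function names and the sitemap URL are hypothetical, and a real crawler would want rate limiting and concurrency on top of this.

```python
import urllib.request
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_urls(sitemap_xml: str) -> list:
    """Extract every <loc> entry from a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]


def warm(urls, timeout: float = 10.0) -> dict:
    """Request each URL once so the full page cache stores a copy.

    Returns a mapping of URL to HTTP status, or None if the request failed.
    """
    results = {}
    for url in urls:
        try:
            with urllib.request.urlopen(url, timeout=timeout) as response:
                results[url] = response.status
        except OSError:  # covers URLError, HTTPError and timeouts
            results[url] = None
    return results


if __name__ == "__main__":
    # Hypothetical sitemap location.
    with urllib.request.urlopen("https://shop.example/sitemap.xml") as response:
        pages = sitemap_urls(response.read().decode("utf-8"))
    print(warm(pages))
```

Running something like this straight after each deploy fits well with busting the cache on deployment, since the cache starts empty at exactly that point.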

After optimisation our App ELB latency is around 100-200ms when using an FPC.

Search

You should probably consider installing a plugin unless you want MySQL powering your catalog search engine. This will also improve the quality of results. We're currently using the Algolia Magento Plugin which is a hosted search solution. It adds about 150ms to our catalog search result pages which isn't a deal-breaker for us.

I haven't played with the other popular plugins for Solr, Sphinx or Elasticsearch, but I'd recommend these if you're interested in avoiding a network round trip to a hosted service.

Profiling

Profile the code with Blackfire.io or Xdebug and take note of the Magento specific metrics in the former. Be sure to disable the full-page cache when profiling for obvious reasons. Magento also has a built-in profiler but I am yet to play with it and quite honestly I wouldn't trust it.

Cron Jobs

Use a Jenkins server to run your cron jobs and don't execute expensive operations through the admin interface.

Monitoring

There's no point running a cloud-based Magento installation without keeping an eye on the numbers. When it comes to monitoring applications and services on AWS, Datadog is still my favourite product. Keep an eye on RDS CPU %, App ELB latency, App CPU %, free cache memory, and ELB 5xx and 4xx counts. Generally we find that an increase in RDS write IOPS directly affects our App ELB latency, so avoid expensive product imports during peak times.

Wrap Up

I'll expand on this post more over time. Please reach out to me if you want any further information or if you think we can improve something. Best of luck!